Sadly, though, I can’t get GTK to properly compile under macOS Tahoe (w/Apple Silicon). The problem is not with your impeccable Go code, which is a precious gem; it’s just C/C++ Dependency Hell™.

It compiles fine under several variants of Linux I have around here. Unfortunately, none of them has a display, so I can’t test it out.

If you are *really* interested in the whole story...

I’m a Mac user, and I’m on Apple Silicon and all that. I also have Rosetta 2 turned off (= Apple’s Intel emulation for ARM64 chips, for those who don’t know), deliberately so, to make sure I can rule out in advance what won’t be working in 2028, when Apple will discontinue any form of Intel support.

And, deliberately, I’m using MacPorts as my preferred package manager for macOS. These days, Homebrew is far more popular, but… let’s say that their maintainers are far too opinionated for my tastes.

That said…

We all know how cool it is to be able to call any C library from Go, usually effortlessly, which means that you can get access to an infinite amount of awesome libraries out there with just a thin Go wrapper on top (or, well, those of us who happen to love Go are used to that).

There is just one catch.

Go has an amazing track record of being uncannily compatible with essentially everything out there. Its (modern) packaging essentially means a “zero broken dependencies” environment, so long as package maintainers aren’t changing the URLs of their packages too often (which you can do, but probably shouldn’t); indeed, that was one of the main reasons for Google having ‘invented’ it in the first place. But that’s only true for… well, native Go.

Start adding non-Go libraries, and… well, there goes your Dependency Heaven™ down the drain.

More specifically, in order to make sure you can distribute a Go binary to any computer and not worry much about whether it has everything installed, Go is mostly statically built. The theory is simple: in the days when disk space was at a premium, shared libraries made a lot of sense to keep binaries tiny and not waste disk space. The drawback, of course, is that you’d have to make sure you kept all those dynamically-linked libraries in perfect sync, and that meant dealing with Dependency Hell™.

Therefore, by default, Go builds everything statically. Compile it, distribute it, never worry about a single missing file ever again. Embed your assets (files, icons, etc.) into the binary for extra peace of mind: no extraneous files, just a big, fat binary. In essence, a Go binary is a Docker-in-a-file kind of thing; it contains everything you need to run it (and it’s probably not a coincidence that Docker, which is itself written in Go, generalises the same concept to a whole environment that runs natively, all packed in a single package).

You can force Go to compile dynamically as well, of course; that’s the approach taken to bring Go into embedded devices, where everything — memory, CPU, ‘disk’ space — is reduced to the essentials, and the more you can share among applications, the better.

Then again, most embedded devices are not upgraded partially (there are many exceptions, of course, Raspberry Pi being perhaps the best known): they’re upgraded integrally in one go. This guarantees that no ‘dangling’ library dependencies are present — you get the whole system upgrade in a single package.

Therefore, there are two schools of thought regarding combining C/C++ and Go together, since both have, well, misaligned philosophies: C/C++ links dynamically by default; Go statically. Which one should you pick? Or maybe even… both? (Aye, you can mix and match the two approaches.)

As it happens, usually (with few exceptions that I’m aware of), Go’s intermediate compiling steps using CGo (the ‘bridge’ between the two) will grab whatever dynamic libraries are pre-installed in the system. While the Go packages may be dynamically linked as well, it’s more usual to keep them statically linked.

The consequence is that, if you upgrade the C libraries, your Go application(s) may continue to work… or stop working altogether. (The latter is more frequent than the former.)

To make matters even worse, it’s usual that developers of Go ‘thin wrappers’ over extensive C libraries will pick a ‘stable’ version — i.e., the one their operating system has installed by default — and adapt everything on the Go side to fit that specific version’s intrinsic oddities and quirks.

Then you ship a Go application from, say, Ubuntu Linux to CentOS, and, to your utter surprise, ‘nothing works’, even if both have the same libraries installed.

Aye — but do they have the exact version numbers?

Or perhaps Ubuntu has a ‘developer’ version while CentOS relies on a ‘stable’ version instead? And then, of course, you’ve got Long Term Support versions — which remain ‘frozen in time’ (except for security patches and extreme bug fixes) for several years. It’s great to base one’s Go wrapper code on one of these libraries, believing that it’s as close as you can get to having stable, predictable library dependency trees — until, of course, you don’t.

Throw in different architectures — ARM64 vs. Intel x86_64 — or different operating systems — Linux vs. FreeBSD vs. macOS vs.… Windows — and things become exponentially more confusing to master.

Not, mind you, because of Go. Go is perfectly agnostic: you can run and compile it on pretty much anything, and it will look and work essentially in the same way. I’d safely guess that over 99% of the Go code is totally platform-independent. And even those things that aren’t will be mostly low-level (e.g., acquiring handles from the operating system), allowing the thin layer immediately above that to be absolutely platform-independent.

Unfortunately, that’s not how C works. C makes the reverse assumption: everything is different from platform to platform, from operating system to operating system, even from an individual machine to the next. To cope with all of that mess, C (and C++) rely on a very complex mechanism of generating ‘universal’ configuration files that will help the compiler in figuring out what exactly is available and what is not, and adapt to it (e.g., employing the equivalent of ‘shims’ and ‘polyfills’ to deal with missing features). There is a plethora of tools to deal with that, and I’m sure I can name only a few (autoconf, CMake, and meson/ninja are some of the most common ones); there must be zillions out there. Even Apple and Microsoft have their own (mutually incompatible, of course).

At a higher level, this also remains true. There are a gazillion graphic libraries implemented in C and C++. The most popular ones tend to be cross-platform, and that also means they need exhaustive descriptions on how to compile all the required libraries — until, at a much higher level, programmers can rely upon well-documented APIs to interface with their GUIs, and ‘the same code runs anywhere’. This is true for everything from the humble wxWidgets to Qt to GTK to KDE, to, say, the Unity and Unreal 3D Engines. All ship with extensive tools to deal with these complexities ‘under the hood’ and, eventually, present the high-level programmer with a clean, predictable environment, upon which they can develop their tools, disregarding what happens beneath and letting the tools figure it out properly.

That would all be very nice, of course, if everything were ‘frozen in time’, and if developers would stick to the One Graphics Library To Rule Them All™ principle (well, both Apple and Microsoft strongly encourage their own variant, of course).

In practice, it means struggling with all of them, hoping against hope that they don’t start cannibalising each other, ‘imposing’ versions and configurations that work well for some cases, but are absolutely useless in others (and, naturally, vice-versa).

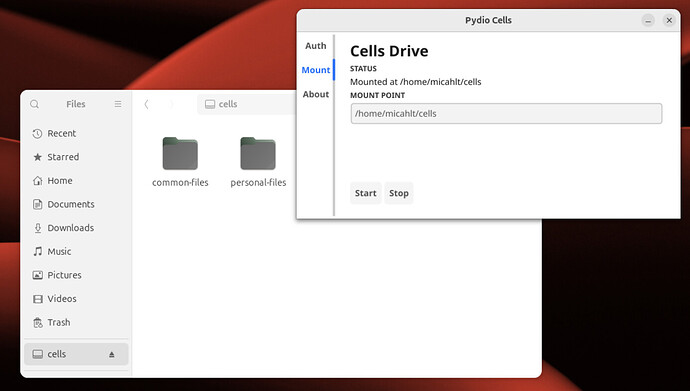

Needless to say, this is exactly what happens with @micahit’s awesome cells-fuse package: it compiles like a charm on a few variants of Linux that I’ve tested (unfortunately, all are headless servers, so I can’t ‘see’ the GUI, I can only say that I would ‘see’ something if, well, I had something to view with).

But under macOS — forget it, at least under my specific configuration: Apple Silicon (ARM64) + macOS Tahoe + MacPorts. There is simply no way to get it all working together, and, believe me, I’ve tried.

In fact, for the past 24 hours, I’ve been busy figuring out how to get GTK4 working on my specific configuration, at least to the point where the Go libraries relying upon GTK4 are happy with what they ‘see’ down under. Unfortunately, this is not obvious at all — too many things are broken, making too many ‘wrong assumptions’ on what ought to be available (but which isn’t) and how to add the ‘next’ layer on top of the previous one.

Alas, so far, I haven’t been lucky: it took me half an eternity to ‘fix’ about a dozen very tricky dependencies, but… I merely scratched the tip of the iceberg that sank the Titanic. There are layers and layers and layers of good C code to deal with (‘it’s turtles all the way down’), and I didn’t manage to ‘fix’ several of them.

Note that this is hardly the fault of cells-fuse: the choice of using GTK to get a portable, universal GUI that works on top of ‘anything’ is a very good one. GTK is what’s used by millions of users running Inkscape or FontForge — among thousands of other popular tools — under whatever operating system they might have.

At the end of the day, Go actually compiles cells-fuse, reinforcing the idea that nothing is ‘wrong’ with your code. At launch, it just misses a handful of symbols, because the ‘wrong’ versions of the dynamic libraries got linked together, dumping errors such as:

```
./cells-fuse
dyld[94844]: Symbol not found: _g_desktop_app_info_get_filename
Referenced from: <58063F11-41B1-3B49-AAF7-1B5DB0B96E37> /opt/local/lib/libgtk-4.1.dylib
Expected in: <9642C08A-3729-3F8E-AAC9-184AAE163859> /opt/local/lib/libgio-2.0.0.dylib
Abort trap: 6 ./cells-fuse
```

Note that, as far as I know, you're **not** making use of *that* 'symbol' at all — but GTK is!
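For anyone who wants to check this kind of mismatch without waiting for `dyld` to complain, here is a rough sketch in Go, using only the standard `debug/macho` package. It asks whether a given `.dylib` actually exports a given symbol. The program name `symcheck` and the helper names are made up for illustration; it assumes a thin (single-architecture) library, since `macho.Open` does not read universal binaries, and it has only been reasoned through, not run against the libraries in the error above.

```go
package main

import (
	"debug/macho"
	"fmt"
	"os"
	"strings"
)

// exportedSymbols lists the external symbols defined by the Mach-O file at path.
func exportedSymbols(path string) (map[string]bool, error) {
	f, err := macho.Open(path)
	if err != nil {
		return nil, err
	}
	defer f.Close()

	syms := map[string]bool{}
	if f.Symtab == nil || f.Dysymtab == nil {
		return syms, nil // stripped or unusual file: nothing to report
	}
	// The dynamic symbol table records which slice of the symbol table
	// holds the externally defined (exported) symbols.
	start := int(f.Dysymtab.Iextdefsym)
	end := start + int(f.Dysymtab.Nextdefsym)
	if end > len(f.Symtab.Syms) {
		end = len(f.Symtab.Syms)
	}
	if start > end {
		start = end
	}
	for _, s := range f.Symtab.Syms[start:end] {
		syms[s.Name] = true
	}
	return syms, nil
}

// exports reports whether name is in syms, accepting the name with or
// without the leading underscore that C symbols carry on macOS.
func exports(syms map[string]bool, name string) bool {
	return syms[name] || syms["_"+strings.TrimPrefix(name, "_")]
}

func main() {
	if len(os.Args) != 3 {
		fmt.Println("usage: symcheck <library.dylib> <symbol>")
		return
	}
	syms, err := exportedSymbols(os.Args[1])
	if err != nil {
		fmt.Fprintln(os.Stderr, "cannot read library:", err)
		os.Exit(1)
	}
	if exports(syms, os.Args[2]) {
		fmt.Println("exported:", os.Args[2])
	} else {
		fmt.Println("NOT exported:", os.Args[2])
	}
}
```

Pointed at the library named in the "Expected in" line, with the symbol from the "Symbol not found" line, it should tell you whether the installed build of that library was ever going to satisfy GTK.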

In my example above, I have spent plenty of time tweaking `libgtk-4.1` so that it compiles and runs without issues. Sadly, though, GTK requires a *lot* of libraries, most of them not even part of the *same* repository, which means going through each of them manually, bumping it to the 'correct' version, rebuilding everything... and hoping it works... if not, going to the next library in the list of dependencies... doing the same... and so on, and so forth, until (theoretically), considering that there is just a *finite* number of libraries overall, *eventually* there will be an *end*.

But I've not been persistent enough to go all the way to the end, of course.

### Enter Fyne

All graphic libraries, to the best of my knowledge, are, at some point, bound to a C library that ties into the operating system. The difference is mostly how 'deep' you need to go until you reach that layer.

GTK, as far as I can understand it, is relatively higher-level: it provides the shims to use the topmost layer of the library, and just thinly wraps the Go layer around it. This is, after all, the most common option. Unfortunately, it also means that there are a *lot* of C layers that all need to be in sync, dependency-wise, which is the nightmare described before.

A few steps deeper you hit on OpenGL (I suppose the same applies to Vulkan; I haven't checked). Here, you've stripped a lot of the upper layers away, and get closer to the hardware. There are plenty of OpenGL C libraries, of which half a dozen are the most popular. The so-called 'official Go package' for OpenGL does something clever: first, it figures out which library you've got. Then, because all OpenGL libraries come with an XML description of all functions and parameters they support (and these *must* be in sync with the library itself!), what these clever guys have done was the following: extract the XML from a well-known location and pre-generate the Go shims that will call these C functions directly.

Therefore, if you ever change libraries (or get an updated version of the library you happen to have installed), all you need is to re-generate the shims and compile the Go code against the 'new' library. The End. There is *nothing* to change in the Go code itself, which will only call Go functions, standardised at a level that allows Go programmers to have some confidence that *these* functions will not abruptly change their interface.

What happens at the 'shim layer' is fully automated (yay for `go generate`! 😄), so even when cross-compiling to a different platform, which may or may not have the 'correct' set of libraries, that will be irrelevant: the OpenGL Go package will adapt its 'shim layer' to whatever is installed, and the rest of the code remains exactly as it is. Of course, you will need at least to have *a* developer's version of OpenGL properly installed on the target platform *and* have a fully functional C/C++ compiler. But there should be no surprises.
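To make the "generate the shims from XML" idea a bit more concrete, here is a toy sketch. It is *not* the real generator used by the OpenGL Go package: the XML layout, the type table, and the function names are all simplified assumptions made up for this example. It only shows the general shape of the trick: read a machine-readable description, emit Go declarations.

```go
package main

import (
	"encoding/xml"
	"fmt"
	"strings"
)

// registry mirrors a deliberately simplified, made-up XML layout.
type registry struct {
	Commands []command `xml:"command"`
}

type command struct {
	Name   string  `xml:"name,attr"`
	Params []param `xml:"param"`
}

type param struct {
	Name string `xml:"name,attr"`
	Type string `xml:"type,attr"`
}

// goTypes maps C type names found in the description to Go types.
var goTypes = map[string]string{
	"GLbitfield": "uint32",
	"GLfloat":    "float32",
	"GLint":      "int32",
}

// generate turns the XML description into Go function signatures.
func generate(doc string) (string, error) {
	var reg registry
	if err := xml.Unmarshal([]byte(doc), &reg); err != nil {
		return "", err
	}
	var b strings.Builder
	for _, c := range reg.Commands {
		var args []string
		for _, p := range c.Params {
			t, ok := goTypes[p.Type]
			if !ok {
				return "", fmt.Errorf("command %s: unknown type %q", c.Name, p.Type)
			}
			args = append(args, p.Name+" "+t)
		}
		// Drop the C-style "gl" prefix: glClear becomes Clear.
		goName := strings.TrimPrefix(c.Name, "gl")
		fmt.Fprintf(&b, "func %s(%s)\n", goName, strings.Join(args, ", "))
	}
	return b.String(), nil
}

// sample is a hand-written stand-in for the library's XML description.
const sample = `<registry>
  <command name="glClear"><param name="mask" type="GLbitfield"/></command>
  <command name="glLineWidth"><param name="width" type="GLfloat"/></command>
</registry>`

func main() {
	out, err := generate(sample)
	if err != nil {
		panic(err)
	}
	fmt.Print(out)
}
```

Run as is, this prints the two signatures `func Clear(mask uint32)` and `func LineWidth(width float32)`. A real generator would also emit the bodies that call into C; hooked up through a `//go:generate` directive, re-running it against a newer description is all an upgrade would take.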

Alas, as good as it sounds, the OpenGL way has two major drawbacks:

1. It's really programming at a much lower level, which means you don't get the elegance of writing neat, polished, simple, very-high-level code. You're really shuffling bits to buffers and intersecting rectangles and so forth. Obviously, you can *do* your own 'GUI construction SDK' using OpenGL primitives — I'm actually aware of an insane company which has been doing just that for the past 20 years, the fools... — but it's truly a nightmare. OpenGL is flexible, and you get full access to all the magic that the graphics card can give, but it's necessarily complex at the low level.

2. Because different OpenGL libraries have different ways of addressing the hardware and *may* present different APIs to the caller, it means that the OpenGL Go meta-shim-layer-approach, by necessity, will use the largest subset that is supported by *all* of the libraries. This, in turn, means that Go's OpenGL access will be the one with the *least* options (or, at best, have as many functions as the smallest of all the possible libraries).

But there are other solutions out there!

The one that captured my interest most was [**Fyne**](https://fyne.io/). This is a GUI application environment that comes as close as possible to being 'all native'. Of course it isn't (even though they sort of claim that you don't need to use CGo on Windows and are trying to get their solution/trick/hack to be used by the maintainers of the official Go port of OpenGL), but... it's perhaps the 'best' solution out there, for a given value of 'best', of course.

OpenGL, as it goes, is *huge*, because it targets *everything* you can ask a graphics card to do, namely, complex parallel calculations to render 3D images in real time with shaders and whatnot. But to do a GUI you don't need any of that: you're just handling 2D rectangles (and a few odd polygonal shapes, all of which are in 2D). That means that only a subset of OpenGL is actually being actively used. The Fyne developers cherry-picked all the functions they *really* need, and packed those together in a mini-OpenGL library, so to speak: it remains in sync with the official Go port, but it's stripped down to the bare-bones essentials. The bulk of the code is offering a very abstract, high-level layer of functionality that is as simple to learn as GTK, wxWidgets, or similar approaches.

In fact, there is *almost* a 1:1 correspondence between your GTK code and the Fyne code; I believe that even I could do a 'translation', so to speak, especially because you restrict the GUI bits to just two Go source files, one of which is just the initialisation in `main()`. There is still some work to be done (as with translating human languages, you can do it best if you happen to be well-versed in *both*, and I've never toyed around with GTK before), but it's not far-fetched to believe that this can be accomplished.

Unlike, say, the GTK or Qt ports, you do *not* need to have *any* OpenGL library pre-installed (or even worry about it at all): the code is self-contained, so to speak, adding C code snippets (and even some assembly!) on demand, as needed, to interface with whatever graphics card there is underneath all layers. The dependency on C is kept to the barest of the barest minimums — thus the claim that they could avoid CGo on Windows altogether (which, as said, I cannot confirm).

It means that they had little to worry about when, say, porting Fyne to WASM: it compiles out of the box, since Go does that anyway, and you don't need to worry about low-level interfacing with device drivers and such. WASM comes with its own set of rules, WebGL and so forth, and you can call all of that under Go directly.

As a bonus, the Fyne team has developed a great, universal packager for 'all' platforms out there, and, aye, that includes mobile platforms as well. The only thing I didn't see (yet) is support for, say, game consoles, but I suppose that their focus is, for now, non-gaming environments — just plain GUIs.

Although Fyne has been around for several years, it shows that it's not a very polished product yet. For instance, it's not (yet) a Qt replacement, to put it bluntly. There is already a rapid development tool ([Apptrix](https://apptrix.ai/)), which is currently proprietary (it will be released as open source 'soon'), and it only supports the 'standard' Fyne widget set, which is really bare-bones: for instance, while it includes a TreeView, it does *not* include a file browser. There are plenty of volunteers contributing code, though, and (naturally enough) a file browser is one of those contributions; but, as of Apptrix 1.0.0, it's not supported yet.

You will notice that the Apptrix guys don't even mention Go on their website. Their idea is to have developers dragging and dropping widgets around the screen, press a button, and generate all the Go code using Fyne to develop ready-made, packaged applications for all possible platforms — without writing any code at all. This is partially made possible because Fyne can directly access a JSON file describing the whole interface, and auto-generate all the necessary stubs to get working — which is exactly what you need for a rapid prototyping tool that relies on visual programming to accomplish most of the magic.

But, naturally, you can do all the programming manually (as you did).

What particularly impressed me was, in fact, the outstanding WASM support: on the same hardware, visually, there is *zero* difference between something running in a browser or something running from a natively-compiled application. Naturally, there *is* a performance hit. But just from a visual point of view, it's *identical* (there are a few exceptions, I think, which haven't been ported yet).

Why does that matter? Well, these days, we're plagued with the dichotomy of writing so-called backend code using one set of programming languages, and then worrying about a completely different environment for the frontend code, which, these days, is almost always either a React Native or an Electron application.

Fyne *could* be a valid alternative, at least in the Go ecosystem. With Fyne, the main focus is on the actual application code, while the GUI-specific issues become an afterthought: you just write the whole thing once, using a single programming language (Go), and deploy it anywhere, just like Electron or React Native promise.